Building an AI Data Center at Home

Home labs are essentially computers that serve applications for personal use. This can include hosting game servers, running AI models, replacing Google Photos (and Google Drive more broadly), and running other internet-connected services. I wanted to build a home lab (or home data center) for several self-hosted services:

- Immich: A replacement for Google Photos

- Jupyter Lab: A Python-focused data science IDE

- Ollama: Run and self-host LLMs

- Open WebUI: A self-hosted UI for chatting with AI models

Note that the software setup for these services is documented in detail in a GitHub repository here and includes Dockerfiles and setup instructions.

But before we can host these services, we need to build a computer!

Building the Machine

You don’t need much to build a home lab. In fact, any old laptop, desktop, or Raspberry Pi can host basic services. For my purposes, however, I wanted a little more horsepower. I don’t need anything top of the line, but my intended services do call for a solid mid-range system with a decent GPU (at least 12GB of VRAM).
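As a rough sanity check on the 12GB figure, model weights alone take about (parameter count × bits per parameter ÷ 8) bytes. The sketch below is back-of-the-envelope only. The model sizes, quantization levels, and the 2GB of headroom are illustrative assumptions rather than measurements from this build, and the estimate ignores the KV cache and runtime overhead.

```python
def weight_footprint_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_param / 8


def fits_in_vram(params_billions: float, bits_per_param: int,
                 vram_gb: float = 12.0, headroom_gb: float = 2.0) -> bool:
    """True if the weights fit while leaving headroom_gb free.

    headroom_gb is an assumed allowance for the KV cache and runtime
    overhead; the real requirement depends on context length and runtime.
    """
    return weight_footprint_gb(params_billions, bits_per_param) <= vram_gb - headroom_gb


# Hypothetical model sizes (billions of parameters) and quantization levels.
for size, bits in [(7, 4), (13, 4), (7, 16)]:
    print(f"{size}B @ {bits}-bit: {weight_footprint_gb(size, bits):.1f} GB, "
          f"fits in 12GB: {fits_in_vram(size, bits)}")
```

Under these assumptions, 4-bit models in the 7B to 13B range fit comfortably on a 12GB card, while a 7B model at 16-bit precision does not.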

Here is what I bought:

| Component | Spec |
|---|---|
| Case | be quiet! Base 600 |
| PSU | be quiet! Pure Power 13M 750W |
| CPU Cooler | be quiet! Pure Rock 3 |
| Motherboard | Asus B650E MAX WIFI |
| CPU | AMD Ryzen 5 7600X |
| RAM | G.Skill Flare X5 DDR5 32GB (2x 16GB) |
| Boot Drive | Patriot Memory P320 256GB |
| RAID Drives | 4x 1TB Samsung 5600 RPM Drives |
| GPU | MSI GeForce RTX 3060 12GB |

You can see the build system internals below:

Basic Setup

Now we need to set up the system. First, I decided to use Ubuntu 24.04 LTS as my host OS. LTS stands for long-term support, which makes the release well suited to long-running, stable use. Next, I install the Nvidia drivers for my GPU along with a few utilities like curl and git.

From here, I install Tailscale (or as I like to call it, pure magic). Tailscale is nothing short of an incredible service. In short, you add devices to something called your tailnet. This is a network of devices (including iPhones, laptops, and of course servers) which can talk to each other privately from anywhere in the world. Tailscale is based on the modern WireGuard protocol.

Once you add a few devices to your tailnet, you can reference them by a friendly machine name instead of an IP address. For example, my server is known as treehouse. If I host a service on the server on, say, port 8080, I can simply visit http://treehouse:8080 from any other device on my tailnet. Amazing!
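The same name-based addressing works from scripts. As a minimal sketch, the helper below builds a service URL from a machine name and checks whether a TCP port is accepting connections. The function names are hypothetical, and `treehouse` and port 8080 are just the example values from the paragraph above.

```python
import socket


def service_url(host: str, port: int, scheme: str = "http") -> str:
    """Build the URL for a service running on a tailnet machine."""
    return f"{scheme}://{host}:{port}"


def is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and names that do not resolve.
        return False
```

From another device on the tailnet, `is_reachable("treehouse", 8080)` should return `True` while the service is up, and `service_url("treehouse", 8080)` gives the address to open in a browser.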

RAID

I also decided to use a RAID 6 array for long-term backup. This uses the four 1TB drives mentioned in the prior section. If you don’t know, a redundant array of independent disks (RAID) combines multiple drives into fault-tolerant storage. RAID 6 needs at least four drives to operate (RAID 5, by comparison, needs at least three).

With four 1TB drives, I get 2TB of usable storage, because RAID 6 reserves two drives' worth of capacity for parity. In exchange, up to two of the four drives can fail at the same time without any data loss! This makes RAID a powerful part of a self-hosted data strategy. Best practice is to also keep a separate offsite backup (in case the entire server is lost or damaged) for truly redundant self-hosted storage.
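The capacity arithmetic can be sketched in a few lines. This is a simplified model that assumes equal-sized drives and ignores filesystem and metadata overhead. The function names are hypothetical.

```python
# Drives' worth of capacity used for parity, and minimum drive count, per level.
PARITY_DRIVES = {0: 0, 5: 1, 6: 2}
MIN_DRIVES = {0: 2, 5: 3, 6: 4}


def raid_usable_tb(level: int, drives: int, drive_tb: float) -> float:
    """Usable capacity in TB for an array of equal-sized drives."""
    if level not in PARITY_DRIVES:
        raise ValueError(f"unsupported RAID level: {level}")
    if drives < MIN_DRIVES[level]:
        raise ValueError(f"RAID {level} needs at least {MIN_DRIVES[level]} drives")
    return (drives - PARITY_DRIVES[level]) * drive_tb


def raid_fault_tolerance(level: int) -> int:
    """Number of drives that can fail without data loss."""
    return PARITY_DRIVES[level]


print(raid_usable_tb(6, 4, 1.0), raid_fault_tolerance(6))  # RAID 6, four 1TB drives
print(raid_usable_tb(5, 4, 1.0), raid_fault_tolerance(5))  # RAID 5, same drives
```

With the same four drives, RAID 5 would give 3TB but survive only one failure. RAID 6 trades 1TB of capacity for tolerance of a second failure.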

Note that there is software RAID and hardware RAID. Hardware RAID uses a dedicated controller, which can enhance performance. However, I am using software RAID via mdadm. This is powerful because I could install mdadm on a totally different system, attach as few as two of the four drives, and recover all my files there.
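With Linux software RAID, the kernel reports array state in `/proc/mdstat`, which makes it easy to check whether an array has lost a drive. The sketch below parses that text and flags degraded arrays. The sample text is illustrative rather than copied from this server, the device names in it are hypothetical, and the parser only handles the common two-line layout shown.

```python
import re

MDSTAT_PATTERN = re.compile(
    r"^(md\d+) : (\S+) .*?\b(raid\d+)\b.*\n"  # e.g. "md0 : active raid6 sdd[3] ..."
    r".*\[(\d+)/(\d+)\] \[([U_]+)\]",          # e.g. "... [4/4] [UUUU]"
    re.MULTILINE,
)

# Illustrative sample only; real output varies by system.
SAMPLE = """\
Personalities : [raid6] [raid5] [raid4]
md0 : active raid6 sdd[3] sdc[2] sdb[1] sda[0]
      1953260544 blocks super 1.2 level 6, 512k chunk, algorithm 2 [4/4] [UUUU]

unused devices: <none>
"""


def parse_mdstat(text: str) -> list:
    """Return one dict per md array found in /proc/mdstat-style text."""
    arrays = []
    for name, state, level, expected, active, flags in MDSTAT_PATTERN.findall(text):
        arrays.append({
            "name": name,
            "state": state,
            "level": level,
            "expected": int(expected),   # devices the array is configured for
            "active": int(active),       # devices currently working
            "missing": int(expected) - int(active),
            "degraded": "_" in flags,    # "_" marks a missing or failed member
        })
    return arrays


for array in parse_mdstat(SAMPLE):
    print(array["name"], array["level"], "degraded" if array["degraded"] else "healthy")
```

On the server itself, the same function could be fed the live file, for example `parse_mdstat(open("/proc/mdstat").read())`.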

Docker Services

I debated whether I should use virtualization or Docker for my home server. Ultimately, I chose Docker because it is comparatively lightweight and allows me to access services using a simple SSH connection to the host machine or via http://<server>:<port> for any particular service.

Ollama

I use Docker Compose to orchestrate services. For example, I run an Ollama server, which makes it easy to self-host LLMs and access them from other Docker containers. The service is described below:

    services:
      ollama:
        image: ollama/ollama
        container_name: ollama
        ports:
          - "11434:11434"
        volumes:
          - ollama_models:/root/.ollama
        environment:
          - OLLAMA_CONTEXT_LENGTH=16384
        restart: unless-stopped
        deploy:
          resources:
            reservations:
              devices:
                - driver: nvidia
                  count: all
                  capabilities: [gpu]
        networks:
          - ollama_network
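Once the container is running, anything that can reach port 11434 can talk to Ollama over its HTTP API. The sketch below sends a single non-streaming prompt to the `/api/generate` endpoint using only the standard library. The host name `treehouse` comes from the Tailscale section, while the helper names and the `olmo2` model tag are assumptions for illustration. Use whichever model you have actually pulled.

```python
import json
import urllib.request

# Assumed base URL: the tailnet machine name plus the port published above.
OLLAMA_URL = "http://treehouse:11434"


def build_generate_request(prompt: str, model: str,
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str, base_url: str = OLLAMA_URL,
             timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text (requires a running server)."""
    request = build_generate_request(prompt, model, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

For example, `generate("Why is the sky blue?", model="olmo2")` would return the model's answer as a string, provided that model has been pulled on the server.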

The ollama service is hosted on port 11434. I also configure a few other settings, including GPU access (needed to make models fast) and a larger context length. Other services can now leverage the ollama service.

Open WebUI

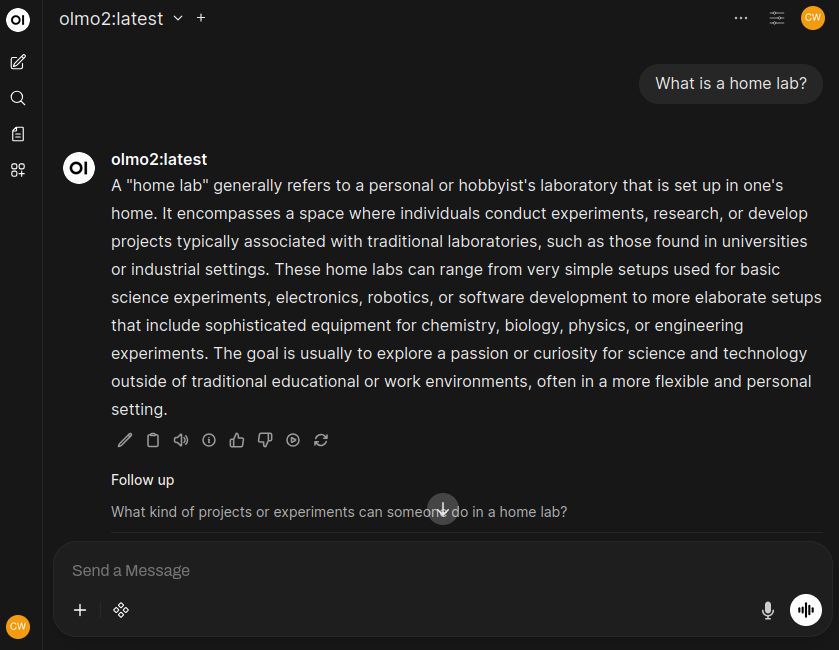

For example, the Open WebUI service can use Ollama as a model backend to pull and run models. Open WebUI also supports retrieval-augmented generation (RAG). I set up the Ollama Programmable Search Engine (PSE) with Open WebUI so I can ask models questions and they will search the web for me!

This is effectively a totally private, self-hosted alternative to ChatGPT or Claude (apart from the model performing an anonymous web search with Ollama PSE). If I turn off web search (which you can easily do on a search-by-search basis), this becomes a completely off-the-grid version of popular chatbots.

One side note: I use the OLMo family of models (OLMo 2 in the image above) from Ai2. Unlike most open models, which usually release only their weights, the models from Ai2 are fully open source. This includes the data, code, and model weights, which provides a level of transparency that most other models do not offer.

Jupyter Lab

I also enjoy doing data science work in Python. I considered using VS Code with Microsoft Dev Containers, but I wanted something more turnkey. Instead, I am using Jupyter Docker Stacks. This is a collection of Docker images that host a Jupyter Lab server and provide a simple development environment, which I lightly customize with a few pip installs. From any device on my tailnet, I can simply type http://<server>:8888 and access a GPU-enabled Jupyter Lab instance, complete with CUDA support and torch ready to use.
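A quick way to confirm that a notebook can actually see the GPU is to ask torch directly. The cell below is a minimal sketch. The function name is hypothetical, and it falls back to a plain message when torch or CUDA is missing instead of raising an error.

```python
def describe_gpu() -> str:
    """Report whether torch can see a CUDA device, without raising."""
    try:
        import torch
    except ImportError:
        return "torch is not installed in this environment"
    if not torch.cuda.is_available():
        return "torch is installed, but CUDA is not available"
    name = torch.cuda.get_device_name(0)
    total_gb = torch.cuda.get_device_properties(0).total_memory / 1e9
    return f"CUDA available: {name} ({total_gb:.1f} GB)"


print(describe_gpu())
```

If the container was started without GPU access, the second message is the one to expect, which usually points to the Docker GPU configuration and not the notebook.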

Immich

Immich is a self-hosted alternative to Google Photos. It lets users store their digital media on a local server. From a web browser, you simply visit port 2283 on the server. With my tailnet, I can also install the iPhone app and access my photos from anywhere in the world, safely hosted at home.

This is where the RAID configuration from above comes in handy! It provides 2TB of redundant photo storage that I can reach from any device on my tailnet.

Benefits of Self Hosting

Self-hosting applications on a home server offers several benefits, including improved privacy, control over data, and customization options. By hosting apps on your own server, you retain ownership of your data rather than having it stored on remote servers controlled by third parties. This gives you greater control over how your information is handled and protects it from potential security breaches that might occur on external servers.

Furthermore, self-hosting allows you to tailor the functionality and user experience of the applications to better suit your needs, unhindered by pre-set configurations or limitations imposed by proprietary platforms.

Happy self hosting!